A lot has been happening in the software testing universe lately – in part, thanks to the improvements in AI technologies; in part, because of the shifting software engineering practices that call for breaking old silos and making larger groups of people (not only QA!) responsible for software quality.

What is Intelligent Test Automation?

Intelligent Test Automation (ITA) is an approach to software test automation that uses “cognitive” technologies like machine learning (ML) and natural language processing (NLP) to improve testing efficiency.

Automating parts of the software testing process is nothing new. Testing practices have evolved in roughly the following order:

Manual Testing > Test Automation > Intelligent Test Automation

In the beginning, software testing was done manually. Then QA engineers started to automate repetitive tasks with scripts. This was a big step towards saving time and getting software apps tested sooner.

However, automated tests have drawbacks:

- You need to maintain them over time. As the software changes, existing tests need to be updated and/or new tests need to be written.

- Automated tests produce false positives. Sometimes a test failure is caused not by a software defect but by a connectivity issue, an insignificant layout change, etc.

- Test results have a poor signal-to-noise ratio. Depending on how many tests you run, you may get a large number of failed tests, of which only a handful indicate serious issues. That’s when weeding through test results and prioritizing issues becomes difficult.

ITA promises to overcome the limitations listed above. Here is how.

- Smart tests are updated and created automatically.

- Smart tests know false positives from true positives.

- Smart tests can intelligently prioritize issues.

And, in fact, they can do much more than that.

ITA use cases

There are many possible ways to implement ITA. However, it is most commonly applied in the following areas.

1. Test authoring

AI can automatically create test cases from user stories. It does so by extracting the intent of each user story (for example, this story is about checkout, and this one is about authentication) and then either mapping the story to existing tests or generating test scenarios from that data. As the last step, test cases can be created automatically from the generated test scenarios.
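The story-to-scenario flow described above can be sketched in a few lines. This is only an illustration: the keyword sets, the scenario templates, and the function names `extract_intent` and `scenarios_for` are all hypothetical, and simple keyword matching stands in for a trained NLP model.

```python
import re

# Hypothetical intent vocabulary; a real tool would use a trained NLP model.
INTENT_KEYWORDS = {
    "checkout": {"cart", "checkout", "pay", "payment", "order"},
    "authentication": {"login", "log", "password", "sign", "account"},
}

# Hypothetical scenario templates, one list of steps per intent.
SCENARIO_TEMPLATES = {
    "checkout": ["add item to cart", "enter payment details", "confirm order"],
    "authentication": ["open login page", "submit credentials", "verify session"],
}


def extract_intent(story: str) -> str:
    """Return the intent whose keywords overlap most with the story text."""
    words = set(re.findall(r"[a-z]+", story.lower()))
    scores = {intent: len(words & kws) for intent, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def scenarios_for(story: str) -> list:
    """Map a user story to test scenario steps via its extracted intent."""
    return SCENARIO_TEMPLATES.get(extract_intent(story), [])


if __name__ == "__main__":
    story = "As a shopper, I want to pay for the items in my cart"
    print(extract_intent(story), scenarios_for(story))
```

A story that matches no known intent returns an empty scenario list, which is a reasonable signal that a human should author that test by hand.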

AI can also auto-generate test cases from software models, if you practice model-based test automation. First, a model is created for the application. The model is a slimmed-down representation of your app: it’s mostly business logic that may or may not contain some code. Then tests are generated from the models you have. (Model-based test automation is usually contrasted with script-based automation, with the latter being traditional and widespread. Script-based automation typically requires QA automation engineers, while model-based automation can be done by less tech-savvy people, if they have the right tools.)
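To make the idea of generating tests from a model more concrete, here is a minimal sketch that assumes the model is a small state machine. The states, actions, and the `generate_tests` helper are invented for illustration; real model-based tools use richer models and smarter path selection.

```python
from collections import deque

# Hypothetical application model: state -> {action: next_state}.
MODEL = {
    "logged_out": {"log_in": "home"},
    "home": {"open_cart": "cart", "log_out": "logged_out"},
    "cart": {"check_out": "order_placed", "go_back": "home"},
    "order_placed": {},
}


def generate_tests(model, start, max_steps):
    """Walk the model breadth-first; every action path of 1..max_steps steps is a test."""
    tests = []
    queue = deque([(start, [])])
    while queue:
        state, actions = queue.popleft()
        if actions:
            tests.append(actions)
        if len(actions) < max_steps:
            for action, next_state in model[state].items():
                queue.append((next_state, actions + [action]))
    return tests


if __name__ == "__main__":
    for test in generate_tests(MODEL, "logged_out", max_steps=3):
        print(" -> ".join(test))
```

When the application changes, only the model needs updating; the test paths are regenerated from it.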

2. Self-healing tests (maintenance)

Smart tests have the ability to stay up to date on their own. Such tests are said to have “self-healing” properties. AI can recognize changes in the tested system and adapt test execution accordingly, so that the tests don’t break.

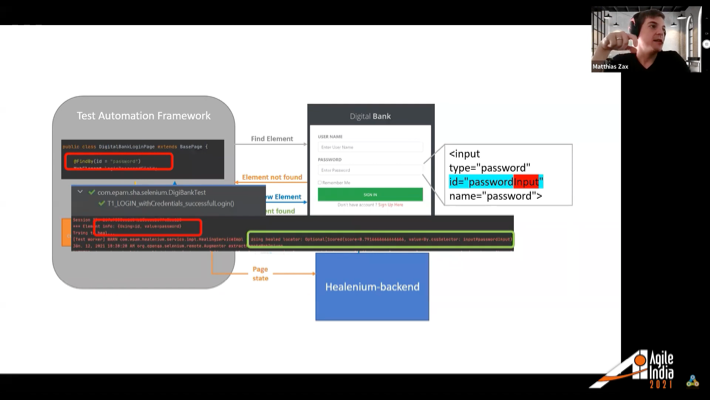

The way this works is as follows: AI models capture various data produced in the testing process and then learn from this data. For example, a common issue with automated tests is NoSuchElementException, which is raised when a locator no longer matches any element on the page. CSS selectors may change, and some changes (like in the case with third-party APIs) may be outside of your control. Tools like Healenium (an open-source tool that improves the stability of Selenium-based tests) allow tests to “heal themselves” and stay operational.
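The sketch below illustrates the general self-healing idea, not Healenium's actual algorithm. It assumes a page can be represented as a list of attribute dictionaries and that a snapshot of the element was saved during an earlier successful run; `NoSuchElement`, `find_by_id`, `similarity`, and `find_with_healing` are all hypothetical names.

```python
class NoSuchElement(Exception):
    """Stand-in for a locator failure such as Selenium's NoSuchElementException."""


def find_by_id(page, element_id):
    """Plain lookup: fails as soon as the id changes."""
    for element in page:
        if element.get("id") == element_id:
            return element
    raise NoSuchElement(element_id)


def similarity(snapshot, candidate):
    """Fraction of attributes (over the union of keys) with identical values."""
    keys = set(snapshot) | set(candidate)
    same = sum(1 for key in keys if snapshot.get(key) == candidate.get(key))
    return same / len(keys)


def find_with_healing(page, element_id, snapshot, threshold=0.5):
    """Try the original locator; on failure, fall back to the most similar element."""
    try:
        return find_by_id(page, element_id)
    except NoSuchElement:
        best = max(page, key=lambda el: similarity(snapshot, el), default=None)
        if best is not None and similarity(snapshot, best) >= threshold:
            return best
        raise


if __name__ == "__main__":
    # Snapshot saved from a previous passing run (hypothetical data).
    snapshot = {"id": "submit-btn", "tag": "button", "text": "Place order", "class": "primary"}
    # The id changed in the new release, so the original locator breaks.
    new_page = [
        {"id": "nav", "tag": "a", "text": "Home", "class": "link"},
        {"id": "order-submit", "tag": "button", "text": "Place order", "class": "primary"},
    ]
    print(find_with_healing(new_page, "submit-btn", snapshot)["id"])
```

The threshold matters: set it too low and the test may "heal" onto the wrong element and hide a real defect, so healed locators are usually worth reporting for review.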

3. Test optimization

Optimization means running fewer tests and/or running them faster. It also means predicting which tests are likely to fail.

Based on analytics from prior releases (which test cases failed and why), AI can predict defects in newer versions. This saves the test manager a lot of time, because analyzing the project’s bottlenecks based on failed tests is part of their routine. It also helps the test lead / PM hold more productive retrospectives (where they discuss actual failures) and planning meetings (where they try to get accurate estimates to ensure a smooth release).

Using impact-based optimization (mapping test cases to changes in app classes/methods), you can optimize up to 80% of test cases. You simply look at which classes/methods have changed between releases and which test cases exercise them. As a result, you can dramatically reduce the number of tests you need to run. However, this approach only works when your developers follow the development and release process consistently, because the mapping is only as reliable as the change data behind it.
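At its core, impact-based selection is a set intersection between what changed and what each test touches. The sketch below assumes you already have a coverage map from test names to the methods they exercise; the map contents and the `select_tests` helper are hypothetical.

```python
# Hypothetical coverage map: test name -> methods the test exercises.
COVERAGE = {
    "test_login": {"auth.login", "auth.hash_password"},
    "test_checkout": {"cart.total", "payment.charge"},
    "test_cart_total": {"cart.total"},
    "test_profile": {"user.update_profile"},
}


def select_tests(coverage, changed_methods):
    """Return the tests that exercise at least one changed method, sorted by name."""
    return sorted(
        test for test, methods in coverage.items() if methods & changed_methods
    )


if __name__ == "__main__":
    # Methods changed between two releases (hypothetical).
    print(select_tests(COVERAGE, {"cart.total"}))
```

In this toy example a change to `cart.total` selects two of four tests. The savings in a real project depend entirely on how accurate and up to date the coverage map is.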

Sometimes, you get compensating errors (when one error is canceled out by a subsequent error), resulting in a “passed” test result. This can happen for a number of reasons; for instance, the test engineer may have made a mistake while creating the test. You may not see the error because the test hasn’t failed, but you would if you looked into the details of how it was run. Again, AI can help you identify instances like this.

Plus, AI can learn from historical data and automatically assign risk categories to test cases based on it. This is called risk-based test optimization. Risk-based optimization is also a part of the test manager’s daily work routine.
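As a rough illustration of risk-based categorization, the sketch below uses a plain historical failure rate in place of a learned model. The thresholds, the history data, and the helper names are assumptions made for this example.

```python
def failure_rate(history):
    """Share of recorded runs that failed; 0.0 when there is no history."""
    if not history:
        return 0.0
    return sum(1 for result in history if result == "fail") / len(history)


def risk_category(history, high=0.3, medium=0.1):
    """Bucket a test by failure rate. The thresholds are arbitrary illustrative values."""
    rate = failure_rate(history)
    if rate >= high:
        return "high"
    if rate >= medium:
        return "medium"
    return "low"


def prioritize(histories):
    """Order test names from most to least failure-prone."""
    return sorted(histories, key=lambda name: failure_rate(histories[name]), reverse=True)


if __name__ == "__main__":
    # Hypothetical pass/fail history per test case.
    histories = {
        "test_checkout": ["fail", "fail", "pass", "pass"],
        "test_login": ["pass"] * 10,
        "test_search": ["fail", "fail"] + ["pass"] * 8,
    }
    for name in prioritize(histories):
        print(name, risk_category(histories[name]))
```

A real system would likely weigh more signals than pass/fail counts, such as code churn or defect severity, but the output has the same shape: a ranked list with a risk label per test.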

4. Test analytics

QA is hell-bent on prevention over intervention. When you perform root-cause analysis of a particular defect, AI can help you see non-obvious patterns like “whenever we introduce changes to this piece of functionality, features C and F break.” This is just one example of how test analytics can be used in ITA to draw important conclusions.
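A pattern like the one quoted above can be surfaced with simple co-occurrence counting, shown here as a stand-in for what an ML-based analytics tool might do. The release data, the 60% cutoff, and the `breakage_patterns` helper are hypothetical.

```python
from collections import Counter, defaultdict


def breakage_patterns(releases, min_ratio=0.6):
    """For each changed component, list features that broke in at least
    min_ratio of the releases where that component changed."""
    changed_count = Counter()
    broke_with = defaultdict(Counter)
    for changed, broken in releases:
        for component in changed:
            changed_count[component] += 1
            for feature in broken:
                broke_with[component][feature] += 1

    patterns = {}
    for component, total in changed_count.items():
        features = sorted(
            feature
            for feature, count in broke_with[component].items()
            if count / total >= min_ratio
        )
        if features:
            patterns[component] = features
    return patterns


if __name__ == "__main__":
    # Hypothetical history: (components changed, features that broke) per release.
    releases = [
        ({"pricing"}, {"C", "F"}),
        ({"pricing", "search"}, {"C", "F"}),
        ({"search"}, set()),
        ({"pricing"}, {"C"}),
    ]
    print(breakage_patterns(releases))
```

Co-occurrence is not causation, so a result like this is a lead for root-cause analysis rather than a conclusion.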

The push for Quality Engineering (QE)

Over the past five years or so, with the adoption of DevOps and cross-functional teams, many teams have been trying to do quality engineering (QE) rather than quality assurance (QA).

The difference between QE and QA lies in the fact that quality is created, and not assured. Sometimes this is called the “shift-left” approach, which means that you are moving testing to an earlier stage in the software development life cycle by introducing unit tests, test-driven development, and other initiatives that make programmers responsible for software quality, too.

Also, by mapping test outcomes to specific code changes, you can empower coders to identify bugs without the help of dedicated test engineers (however, you will still need someone to design and properly map those tests).

In a recent conversation, Sauce Labs’ John Kelly said that modern testing frameworks like Selenium and Appium, while great, are not sufficient to keep up with the velocity of today’s software releases. Nowadays, more people are involved in testing than ever before, including developers and DevOps engineers, which establishes collective responsibility for software quality. However, this “collective responsibility” does not eliminate the need for QA; it simply tries to minimize manual work and make testing less time-consuming.

In conclusion

Intelligent test automation marks the next step in test automation as we know it. The promise is that it could mend certain persistent and annoying problems that limit the potential of automated tests (like outdated tests, false positives, etc.).

ITA is mostly reliant on new technologies like machine learning. It can help in many areas across the testing cycle: test creation, maintenance, test analytics, and so on.
